Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Achieve AI sovereignty and eliminate subscription fees. Practical tutorials on running DeepSeek R1 and Ollama locally, featuring hardware benchmarks and VRAM optimization for private LLM deployment.

Quick Answer: A local AI privacy audit is a repeatable way to prove that no sensitive data leaves your network, using a baseline packet capture (PCAP), egress logs, and process attribution. In this guide, you’ll build a lightweight “Evidence Pack”…
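To make the egress-log and process-attribution idea concrete, here is a minimal sketch. It assumes you have already exported outbound connections (for example from a PCAP or a connection table) into simple records of process name, destination IP, and destination port; the `Connection` record format and the `find_external_egress` helper are hypothetical, not part of any specific tool. It flags every connection whose destination is not a private, loopback, or link-local address, using only Python's standard `ipaddress` module.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Connection:
    """One outbound connection attributed to a local process (hypothetical record format)."""
    process: str
    dest_ip: str
    dest_port: int


def find_external_egress(connections):
    """Return connections whose destination is not private, loopback, or link-local."""
    flagged = []
    for conn in connections:
        addr = ipaddress.ip_address(conn.dest_ip)
        # Anything outside local/private ranges counts as potential data egress.
        if not (addr.is_private or addr.is_loopback or addr.is_link_local):
            flagged.append(conn)
    return flagged


if __name__ == "__main__":
    sample = [
        Connection("ollama", "127.0.0.1", 11434),   # local API traffic (Ollama's default port)
        Connection("ollama", "192.168.1.20", 443),  # LAN traffic
        Connection("updater", "8.8.8.8", 53),       # public destination: should be flagged
    ]
    for conn in find_external_egress(sample):
        print(f"EXTERNAL: {conn.process} -> {conn.dest_ip}:{conn.dest_port}")
```

An empty result for the inference process during a test session is the kind of evidence an audit would record; any flagged line is a lead to investigate, not proof of a leak.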

Quick Answer (2026): The Tesla P40 is worth it only if you need 24GB of VRAM on a tiny budget for batch/offline local inference, and only if you’re comfortable with DIY cooling and older software stacks. If you need plug-and-play or low-latency…

If you’re shopping for a “real 30B local LLM box” in 2026, the internet will mislead you fast: most benchmarks are either 8B-speed screenshots or cloud-grade claims that ignore what actually breaks on your desk: memory headroom and the…

Quick Answer: Yes, the Mac Mini M4 Pro 24GB can be a strong local LLM server for 2–5 person teams, but only if your stack is standardized (mostly 4B–8B quantized models), concurrency is controlled, and long-context workloads are scheduled with discipline. If…

The Mac Mini M4 16GB: Your Solo LLM Workhorse Decision Guide

Quick Answer: Yes, the Mac Mini M4 16GB is a strong local LLM machine in 2026 for 7B–8B quantized models. The real limit is not just model size, but…

Quick Verdict & Strategic Insights

The Bottom Line: For production or externally exposed codebases, where a single critical defect can trigger expensive remediation, audits, and incident response, OpenAI o1-preview is the safer default. DeepSeek R1’s low token price can be…

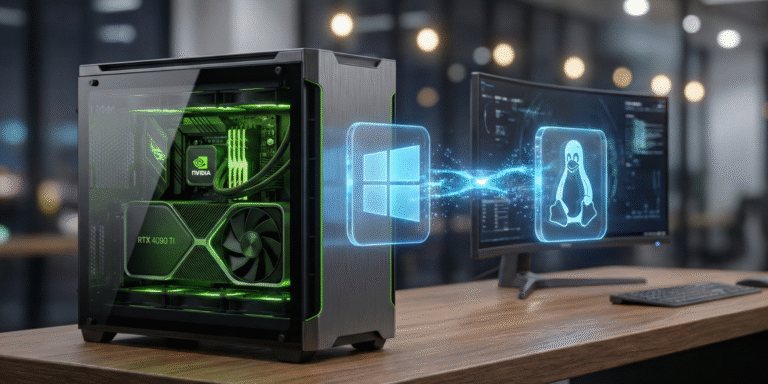

Quick Verdict & Strategic Insights

The Bottom Line: A fully error-proof LocalAI setup on Windows with WSL2 and an NVIDIA GPU is achievable for under $2,000 in hardware, with verified performance of 35–45 tokens/sec (RTX 4070 or better), cloud cost savings of more than $240/user/year, and zero recurring API fees,…

Quick Verdict & Strategic Insights

Conditional Verdict: On single consumer GPUs (8–16GB VRAM), Ollama usually wins for fast deployment and low operational friction. vLLM can outperform when your workload is truly concurrency-heavy (multiple simultaneous requests, API-first pipelines) and that throughput…

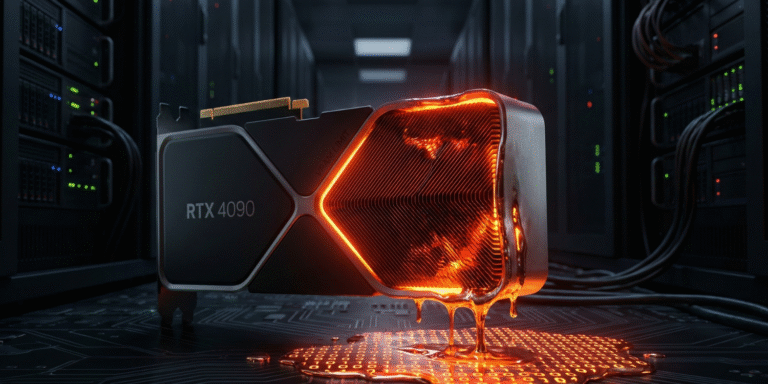

🚀 Quick Answer: 12GB of VRAM is insufficient for 30B+ local LLMs in 2026; upgrade to 24GB for future-proofing. The Verdict: 12GB of VRAM will bottleneck 30B-parameter models under realistic context lengths and usage by 2026. Core Advantage: 24GB+ of VRAM enables stable…
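The 12GB vs 24GB verdict follows from simple arithmetic: weight memory is roughly parameters × bits per weight ÷ 8. The sketch below is a back-of-the-envelope estimate, assuming weights dominate memory and treating 4 bits per weight as a lower bound (real 4-bit formats usually store somewhat more because of scaling metadata). The `headroom_gib` allowance for KV cache and runtime overhead is a hypothetical placeholder value, not a measured figure.

```python
def weight_memory_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights only (no KV cache, activations, or runtime overhead)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


def fits_in_vram(params_billion: float, bits_per_weight: float,
                 vram_gib: float, headroom_gib: float = 2.0) -> bool:
    """True if weights plus a hypothetical fixed headroom fit in the given VRAM."""
    return weight_memory_gib(params_billion, bits_per_weight) + headroom_gib <= vram_gib


if __name__ == "__main__":
    for params in (8, 30):
        for vram in (12, 24):
            need = weight_memory_gib(params, 4)
            verdict = "fits" if fits_in_vram(params, 4, vram) else "does not fit"
            print(f"{params}B @ 4-bit: ~{need:.1f} GiB weights; {vram} GiB VRAM -> {verdict}")
```

A 30B model at 4-bit already needs about 14 GiB for weights alone, so it cannot fit on a 12GB card before any context is added, while 24GB leaves room for the KV cache that longer contexts require.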

🚀 Quick Answer: The Mac Mini M4 Pro is a solid investment for mid-to-high local AI workloads with DeepSeek R1, balancing performance and cost. The Verdict: The 64GB M4 Pro delivers 11–14 tokens/sec at 4-bit quantization, enabling feasible local 32B…